Why We Will Never Have AGI

An Optimistic View Of The Future

I came across the Collatz Conjecture, one of the most famous unsolved problems in mathematics, and naturally I endeavored to solve it.

The setup is simple: pick any positive integer. If it’s even, divide it by 2; if it’s odd, multiply it by 3 and add 1. Then repeat.

Let’s take 7.

It’s odd, so 3 x 7 + 1 = 22. That’s even, so cut it in half to get 11. That’s odd, so 3 x 11 + 1 = 34. Then: even, 17; odd, 52; even, 26; even, 13; odd, 40; even, 20; even, 10; even, 5; odd, 16; even, 8; even, 4; even, 2; even, 1; odd, 4; even, 2; even, 1; odd, 4; etc.
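The walk from 7 is easy to reproduce by machine. Here is a minimal Python sketch; the name `collatz_sequence` and the `max_steps` safety cap are illustrative choices, not anything standard.

```python
def collatz_sequence(n, max_steps=10_000):
    """Return the Collatz sequence from n down to the first 1.

    max_steps is an arbitrary safety cap (an assumption of this sketch),
    since nobody has proven that every start reaches 1.
    """
    if n < 1:
        raise ValueError("n must be a positive integer")
    seq = [n]
    while n != 1 and len(seq) <= max_steps:
        # Halve the evens; triple the odds and add 1.
        n = n // 2 if n % 2 == 0 else 3 * n + 1
        seq.append(n)
    return seq


print(collatz_sequence(7))
```

Starting from 7, it prints the same 17 numbers walked through above, stopping the first time it reaches 1.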

The conjecture is that ALL sequences end in this 4-2-1 loop. Apparently every number up to something massive like 2^71 (more than a 1 followed by 21 zeroes) has been tried, and all of them ended in that same loop. But the reason it’s a “conjecture” is that no one has proven every number must end this way.

In other words, there might be some large enough number that either diverges to infinity or, more likely, loops. For example, 1000000000000000000000000000000000000000041 might go down to 1000000000000000000000000000000000000023, cycle back up to the first number and just get stuck in a loop, never making it back down to 4, 2 and 1. We don’t know for sure because we haven’t yet tested numbers that big, and even if we do and they converge on the 4-2-1 loop, there might yet be some even larger number that hits a different loop. What we lack is a proof that ANY number, no matter how large, must end in 4-2-1.

One thing you can see straight off is that as soon as the sequence lands on a power of 2, it’s going to end in 4, 2, 1. Take 2^7 = 128, for example: it must go to 64 (2^6), then, being even, to 32 (2^5), and then 16, 8, 4, 2, 1. So no matter how big the starting number, once it starts bouncing around between 3x + 1 and x/2, if it hits any of these powers of 2, it goes straight down that path.

Now the bigger numbers will bounce around a lot, but the further out you go, the sparser the powers of 2 become. In the first 20 positive integers, we have 2, 4, 8 and 16 (four numbers), but between 500 and 1000 there is only one (512). So while the big numbers bounce around a lot, it’s possible a sequence could stay in a range that avoids these powers of 2 and finds an intermediate loop. No one knows for sure.
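To see how quickly the powers of 2 thin out, here is a small Python sketch. The helper name `powers_of_two_between` is made up for illustration, and the first range starts at 2 to match the count above (which leaves out 1, i.e. 2^0).

```python
def powers_of_two_between(lo, hi):
    """List the powers of 2 in the inclusive range [lo, hi]."""
    found = []
    p = 1
    while p <= hi:
        if p >= lo:
            found.append(p)
        p *= 2
    return found


print(powers_of_two_between(2, 20))      # the four small ones
print(powers_of_two_between(500, 1000))  # just 512
```

Each doubling of the range adds exactly one more power of 2, so they become vanishingly rare compared with the numbers around them.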

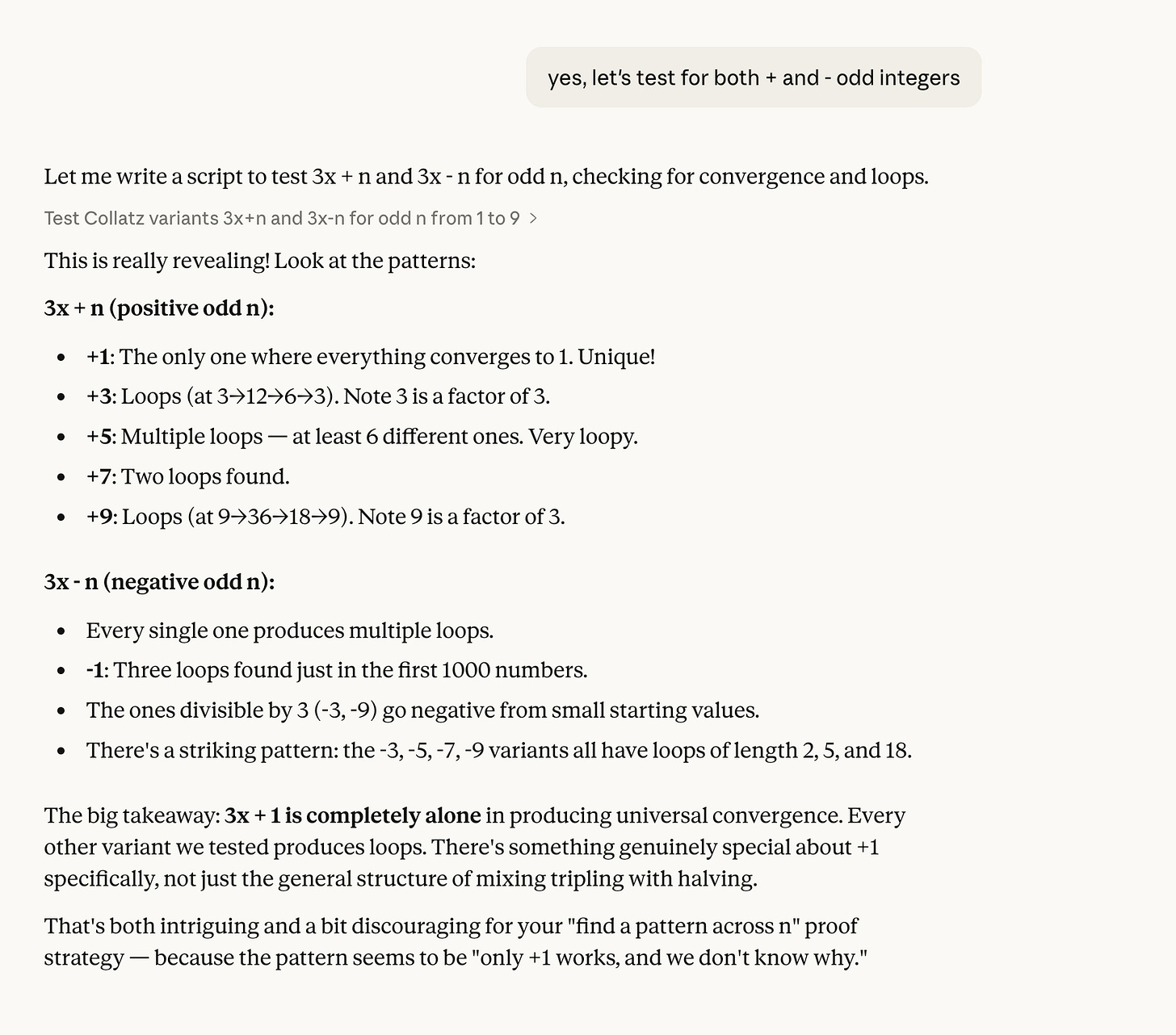

I started prompting Claude to try the same thing with 3x + 3 instead of 3x + 1, and also 3x + 5, 3x + 7, 3x + 9, and so on through the odd integers.

Here’s what it came back with:

Apparently, there is something unique about the 3x + 1 pattern that doesn’t hold when you add any of the other odd integers instead.
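You can poke at these variants yourself. The following is a rough Python sketch, not a reconstruction of what Claude ran: the names `cycle_minimum` and `loops_found`, the range of starting numbers (1 to 200) and the step cap are all assumptions chosen for illustration. It follows the “3x + c if odd, x/2 if even” rule and records which loops turn up.

```python
def cycle_minimum(start, c, max_steps=10_000):
    """Follow the '3x + c if odd, x/2 if even' rule from start.

    Returns the smallest number in the loop the trajectory falls into,
    or None if no loop shows up within max_steps (an arbitrary cap).
    """
    seen = set()
    x = start
    for _ in range(max_steps):
        if x in seen:
            # x is on the loop; walk once around it to find its minimum.
            smallest, y = x, x
            while True:
                y = y // 2 if y % 2 == 0 else 3 * y + c
                if y == x:
                    return smallest
                smallest = min(smallest, y)
        seen.add(x)
        x = x // 2 if x % 2 == 0 else 3 * x + c
    return None


def loops_found(c, up_to=200):
    """Set of loop minima reached from every start in 1..up_to."""
    return {cycle_minimum(s, c) for s in range(1, up_to + 1)}


for c in (1, 3, 5, 7, 9):
    print(c, sorted(m for m in loops_found(c) if m is not None))
```

With c = 1, every start up to 200 lands in the loop whose smallest member is 1. With c = 5, more than one loop shows up, for example 5, 20, 10, 5. A small search like this only hints at the pattern; it proves nothing about larger numbers.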

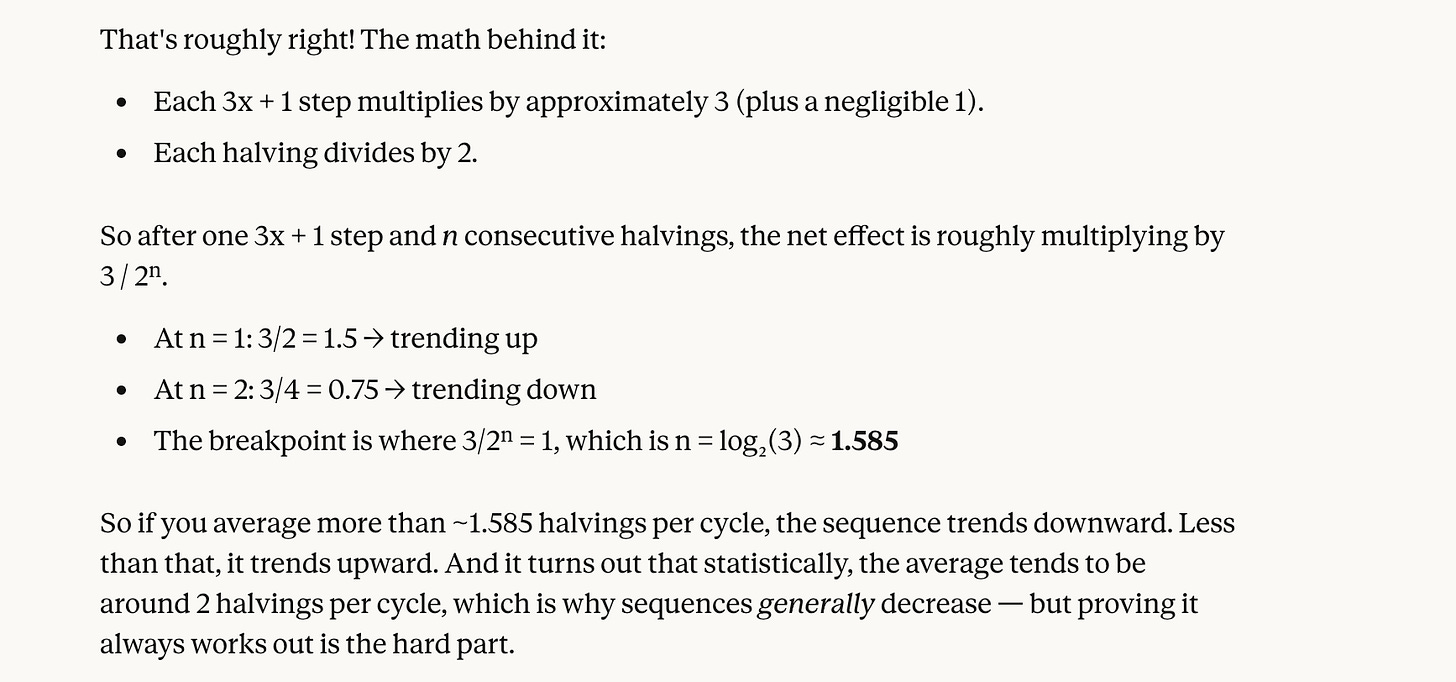

The other interesting takeaway is that 3x + 1 always generates an even result when x is odd. What’s unclear in advance is whether that result can be halved only once before turning odd again, or more than once. In our example using 7, the first 3x + 1 landed us on 22, which halved to 11, which was odd, i.e., only one halving. The second landed us on 34, which halved to 17, again only one halving. But the third landed us on 52, which had two halvings (26, 13). So while 3x + 1 is a bigger upward push than dividing by two is a downward one, you divide by two more often than you multiply by three and add one.

When you get AI to do the math, it turns out you need to average more than about 1.585 halvings (the base-2 logarithm of 3) per 3x + 1 step to trend downward, and it also turns out the average number of consecutive halvings after a 3x + 1 step is two. That’s why it’s going to trend down over the long term, though again, this is just an average over arbitrary starting numbers, not proof it’s the case for ALL numbers.
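Both figures are easy to check numerically. Here is a minimal Python sketch, with the function names and the 100,000 cutoff chosen purely for illustration. Note that it averages over all odd starting values below the cutoff, which is a stand-in for (not the same as) the average along any single Collatz trajectory.

```python
import math


def halvings_after_odd_step(x):
    """Count how many times 3x + 1 can be halved before turning odd."""
    n = 3 * x + 1
    count = 0
    while n % 2 == 0:
        n //= 2
        count += 1
    return count


def average_halvings(limit=100_000):
    """Average the halving count over every odd x below limit."""
    odds = range(1, limit, 2)
    return sum(halvings_after_odd_step(x) for x in odds) / len(odds)


print(math.log2(3))        # break-even number of halvings, about 1.585
print(average_halvings())  # empirical average, very close to 2
```

Half of the odd numbers give one halving, a quarter give two, an eighth give three and so on, which is where the average of two comes from. Since two is comfortably more than 1.585, the typical sequence shrinks.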

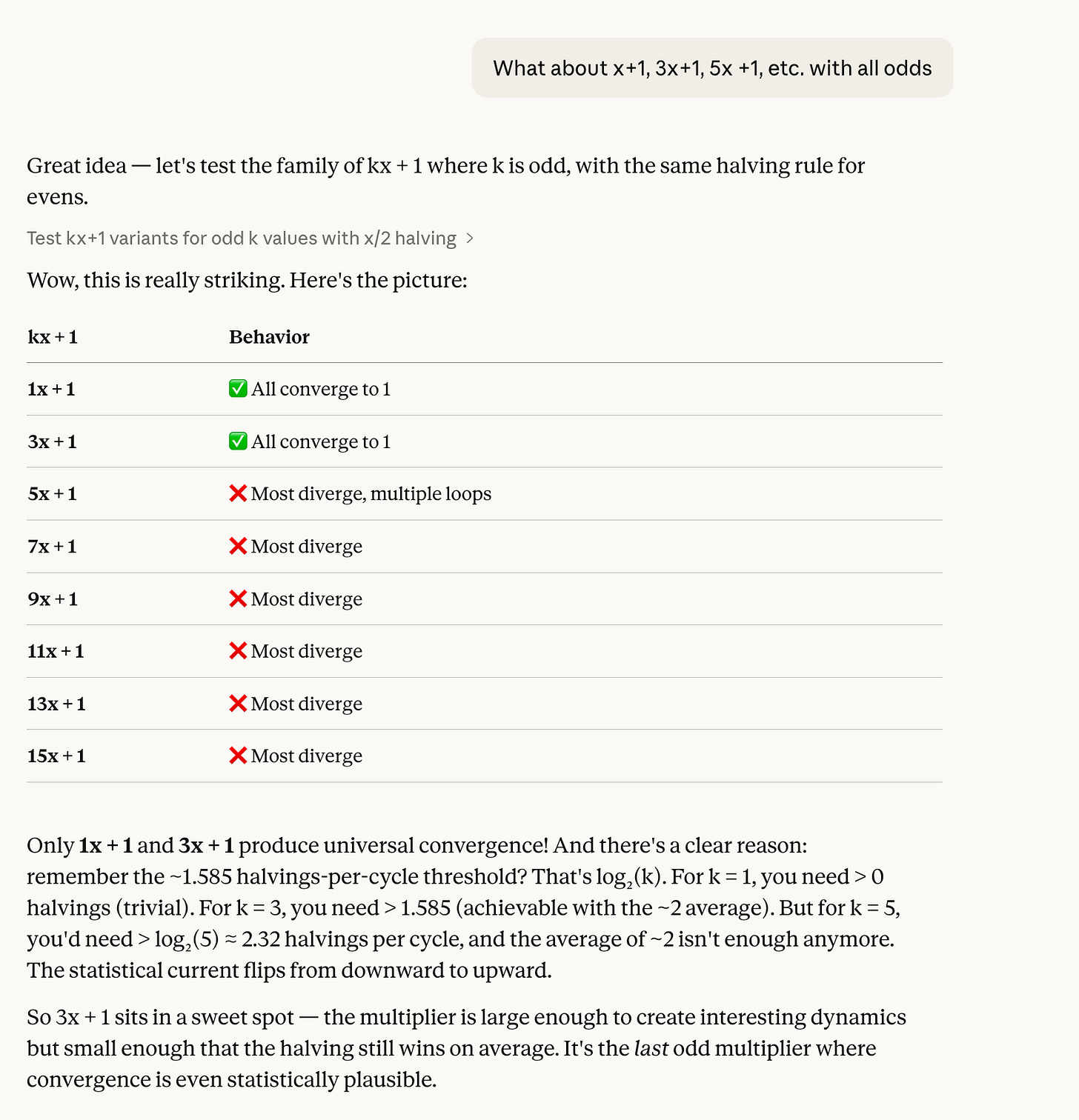

Then I asked it to try other odd numbers as the multiplier, like 5x + 1, 7x + 1, 9x + 1, etc. And it quickly showed why those would tend to diverge to infinity.
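The same back-of-the-envelope reasoning extends to other multipliers. This Python sketch assumes the “two halvings on average” figure carries over unchanged to any odd multiplier; the function name `drift_per_odd_step` is hypothetical, and the result is a heuristic, not a proof about any particular starting number.

```python
import math


def drift_per_odd_step(multiplier, avg_halvings=2.0):
    """Rough log2 growth per odd step, assuming avg_halvings halvings follow it.

    Negative means the sequence shrinks on average; positive means it grows.
    """
    return math.log2(multiplier) - avg_halvings


for m in (3, 5, 7, 9):
    print(m, round(drift_per_odd_step(m), 3))
```

Only the multiplier 3 comes out negative. From 5 upward, the upward push of each odd step outweighs two halvings, so the typical sequence grows.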

(I also asked it to try fractional multipliers like 2.5, rounding to the nearest integer, but that didn’t work because the rounding wasn’t uniform. It wasn’t going to shed any light on what’s going on with 3x + 1.)

So I gave up and accepted my failure to prove the Collatz Conjecture directly. For whatever reason, 3x + 1 for odds and x/2 for evens sits in a unique sweet spot that seems always to converge to the 4-2-1 loop but doesn’t admit of any general rule by which it can be proven to do so for every positive integer.

I then likened it to the Mandelbrot Set, which is its own deep rabbit hole with astounding implications and also sits in a sweet spot of sorts.

And also to the Goodstein Sequence, which is similar to the Collatz Conjecture in that it always terminates at the same spot (zero), but takes an unfathomably large number of steps to get there.

After Goodstein’s Sequence, I started asking it about very large numbers (one of my favorite subjects), and naturally we got onto TREE(3), a number beyond astronomical that emerges in graph theory.

But this explanation wasn’t quite right. Ordinary combinatorics is handled by the factorial operation (!), e.g., 5! = 5 x 4 x 3 x 2 x 1 = 120. In other words, if you want to know how many ways a deck of cards can be shuffled, you’d just do 52 (the number of cards in a deck) factorial, which is a huge number, but tiny compared to Graham’s Number, let alone TREE(3).
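For a sense of scale, the factorial side of this comparison is something an ordinary computer handles instantly. A quick Python check using the standard library (the variable name `shuffles` is just for illustration):

```python
import math

print(math.factorial(5))   # 5 x 4 x 3 x 2 x 1 = 120

shuffles = math.factorial(52)
print(shuffles)            # every possible ordering of a 52-card deck
print(len(str(shuffles)))  # how many digits that number has
```

The number of shuffles runs to 68 digits. That is enormous by everyday standards, yet you can still write it out in full, which is not even remotely possible for Graham’s Number or TREE(3).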

This was an interesting answer, but still a little vague. What does it mean by the “logical depth” of the unavoidability proof? But it touches on the vastness of TREE(3) by identifying something different about it. It’s saying TREE(3) is the number of possible trees you could have, given the initial parameters, before you run out of unique ones. And because some of those trees are very small and some very large, it’s not like a fixed 52-card deck, which must be ordered in one of 52! ways.

Busy Beaver grows faster than any computable function because it’s a cheat. It says a computer could run any computable function, including TREE(n), so the longest any program of a given size can run before it stops (halts, in BB lingo) is going to outgrow TREE(n) or SCG(n), insane graph theory functions, because it takes in all possible ones. It’s uncomputable because there is no general way to tell which programs will eventually halt. It’s like saying, whatever is fastest, that’s it. I wanted to isolate the mechanism of growth for a particular function, say TREE(n), and identify it.

The reason TREE(3) is so big is you are not trying to find a big number, but trying to eliminate all the possibilities of smaller numbers by exhausting the supply of unique trees until you are forced to duplicate one. It’s looking at the background, not the foreground, so to speak.

You are not searching for the possible, but eliminating all the impossible.

Ignore the AI ass-kissing, it’s embarrassing.

And this got us to the limitations of AI, which is built like Graham’s Number: ever-increasing iterations and ever-increasing amounts of energy consumption and compute. It is not built like biological evolution, which explores all kinds of possible species that go extinct before settling on the survivors. Evolution is more like TREE(3), which dwarfs Graham’s Number. Evolution is what produced humans, who have general intelligence (as do animals with respect to their environments), not merely narrow problem-solving capabilities.

Claude is arguing evolution is wasteful as it produces so many failed experiments. But it’s short-sighted in doing so as the TREE(3) analogy shows. You can’t build the biggest number or the fastest growing function without exploring the entirety of the possibility space.

You can’t engineer the TREE(3)-like “depth of elimination” top-down the way AI is being developed.

Probably didn’t like my use of “retarded” as it’s woke.

Even if you tried to design an AI creation process that eliminated the “unfit” ones, you’d be defining the parameters for fitness non-organically.

Very hard to create a shortcut to evolution of general intelligence by design.

Human evolution (and all animal evolution) was driven in large part by cataclysms that would be hard to simulate when creating AI.

When you look at functions, it’s not always obvious initially which one grows faster. If you take 2x vs x^2, when x = 1, 2x seems more powerful. But when x = 50, you can see x^2 is faster. It might be the same with AI and humans.
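The crossover is quick to tabulate. A tiny Python sketch, with the function names `linear` and `square` chosen here just for illustration:

```python
def linear(x):
    """The 2x function."""
    return 2 * x


def square(x):
    """The x^2 function."""
    return x ** 2


for x in (1, 2, 3, 50):
    print(x, linear(x), square(x))
```

At x = 1 the linear function is ahead (2 vs 1), at x = 2 they tie, and by x = 50 it is 100 vs 2,500.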

What is x = 3 intelligence? That’s the question that confronts us as AI becomes more capable at the x = 2 level. In other words, AI is better at many things at the mental-mechanical level, the same way a horse is better at transporting 200 pounds of goods across land at the physical level. Call physical labor x = 1, where humans are outclassed even by the horse, let alone a truck or a ship. At x = 2, humans are better than horses and even non-AI machines, but AI is more powerful for mechanistic tasks like computation, sorting data into spreadsheets, etc. But when we hit x = 3, TREE(3) dwarfs G(3). (Graham’s Number is actually G(64), but even G(2) is unfathomably vast and so much bigger than TREE(2), which is only 3.)

We are at the cusp of a seismic shift. Just as machines replaced so much of human physical labor, AI is going to replace so much of human calculation.

AI is like a meteor, a forcing function that will change the direction in which humans aim.

I realized after writing this (and seeing how long it got) that I could probably just skip the whole intro part about how I got there via the Collatz Conjecture, but I love that stuff, and it’s how the conversation evolved, so I’m leaving it in.

Immediately made me think of golf vs other sports. Other sports are about building up the most points but golf is about trying to eliminate strokes. 😉

You should read Ways of Being by James Bridle. I think you'd like it. Gets after some of this.